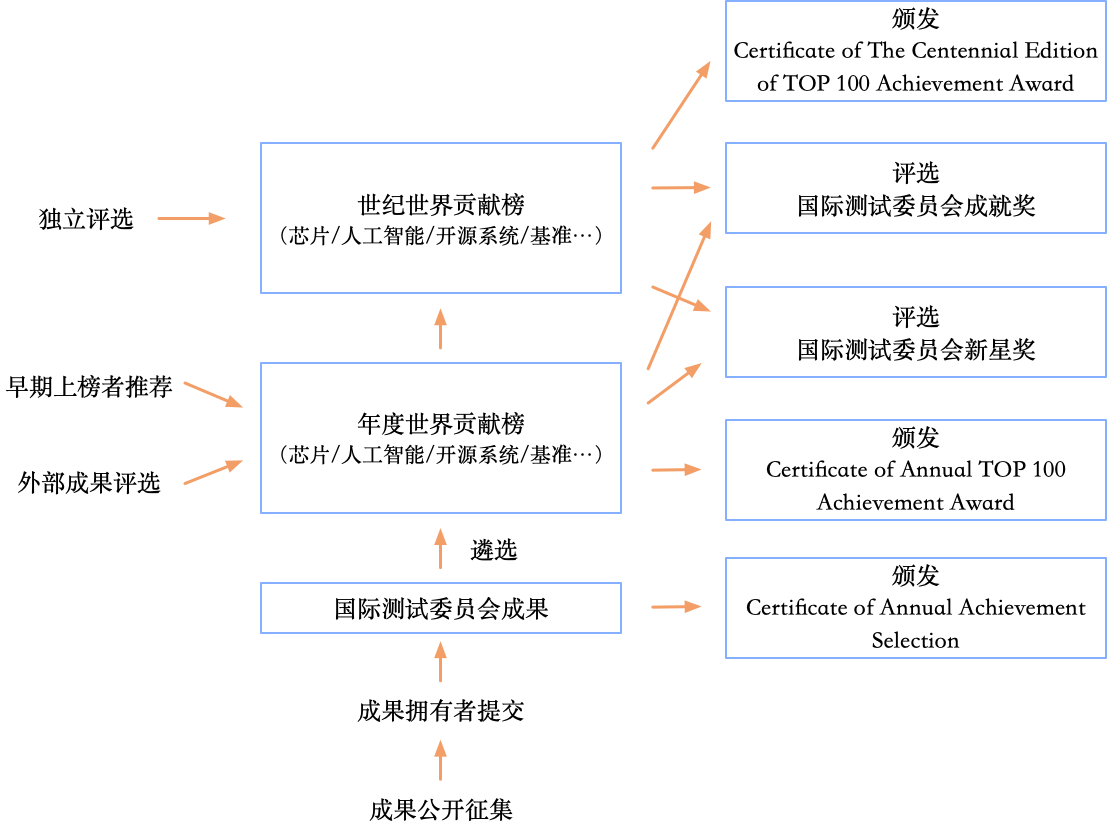

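To make sure no important achievement is overlooked, BenchCouncil is calling for achievements in artificial intelligence, chips, open source, and benchmarks and evaluation. After a preliminary evaluation, submitters will have the opportunity to exhibit or present their work at the FICC 2023 conference and to be considered for the final rankings.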

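Top Ten Benefits

1. Submitted achievements will be included and cited in BenchCouncil's annual achievement report. Submitters must provide valid citation information, such as paper publications and code repositories. The compiled annual report will be formally published in BenchCouncil Transactions.

2. Submitters will receive an annual achievement certificate in English. For example, the certificate for the chip category will be titled "2023 BenchCouncil Chip Achievement Selection Certificate". The certificate will show the names of the individuals and institutions.

3. Achievements will be displayed on the official English web page for BenchCouncil achievement evaluation (/evaluation/).

4. The official BenchCouncil English web page will link to each achievement. Submitters must ensure that the linked content is truthful, accurate, and not misleading.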

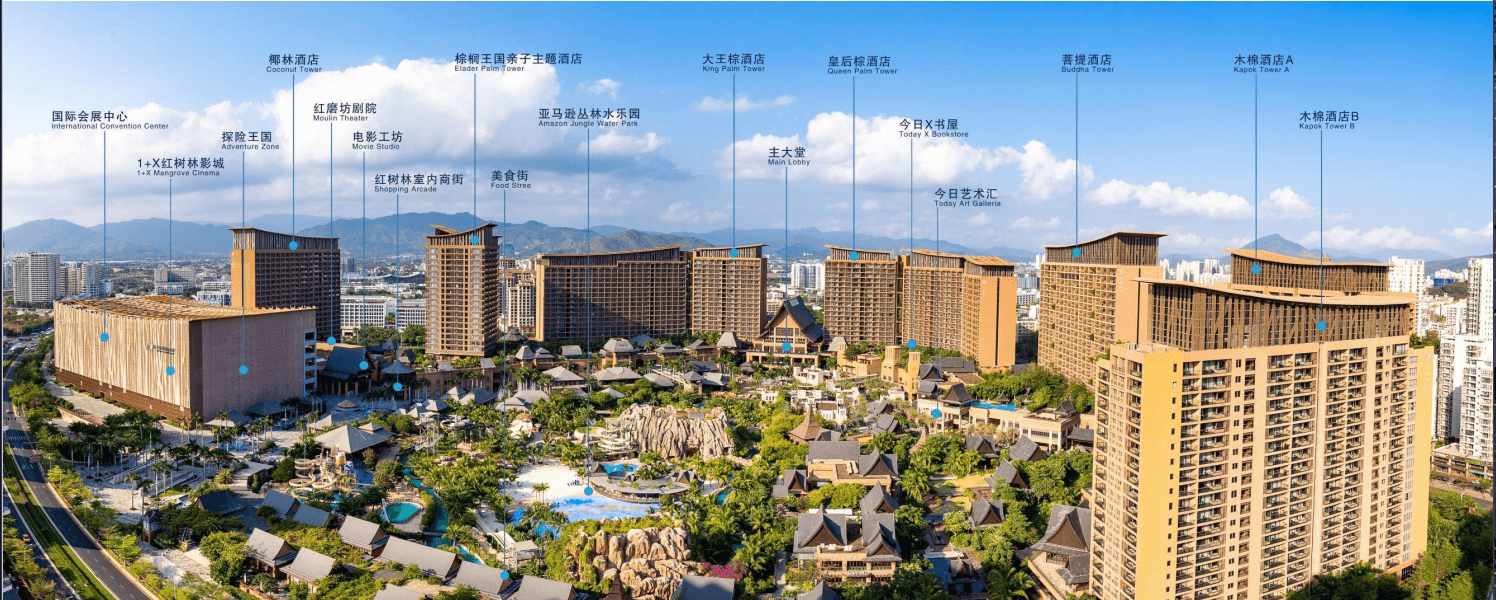

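5. Submitters will have the opportunity to attend four conferences: Chips 2023, OpenCS 2023, Bench 2023, and IC 2023, and to engage extensively with international peers.

6. Selected achievements will be exhibited at the conference venue. The specific time slot (usually no longer than one day) and location must be coordinated with the conference organizers.

7. Submitters may have the opportunity to present their work at the conference, subject to further evaluation and to scheduling by the conference organizers.

8. Outstanding achievements invited for presentation at the conference may, after committee evaluation and peer recognition, be included in the annual edition of World Contributions (final version). The committee has reserved 20 slots for each of the four areas: chips, artificial intelligence, open source, and benchmarks and evaluation.

9. Exemplary achievements that stand the test of time may be included in the century edition of World Contributions (version open for public comment).

10. Submitters may apply for travel grants.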

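BenchCouncil Awards Overview

Scope of the call for achievements:

Chips (including but not limited to: system design, logic design, physical design, timing design, verification and simulation, simulators, design-aid tools, emerging chips such as superconducting and quantum chips, emerging accelerators, semiconductor materials, lithography, circuits, and packaging technology).

Artificial intelligence (including but not limited to: machine learning, reinforcement learning, natural language processing, image analysis, video analysis, data mining, recommender systems, knowledge representation and reasoning, medical image processing, multimodal information fusion, swarm intelligence, intelligent robots and systems, intelligent control, smart healthcare, AI and astronomy, AI and high-energy physics, AI and space science, AI and transportation, AI and oceanography, AI and security, AI and law, AI and finance, AI and traditional industry, AI ethics and governance, AI and big data, AI and materials, AI in civil aviation, and AI and business management).

Open source (including but not limited to: chips, operating systems, containerization and virtualization, data management, networking, programming languages and compilers, systems and frameworks, basic libraries, monitoring and optimization tools, big data, AI, cloud computing, HPC, blockchain, LLM, autonomous driving, digital twins, Internet of Data, privacy and security, datasets, and education).

Benchmarks and evaluation (including but not limited to: econometrics, clinical medicine evaluation, drug evaluation, business and financial benchmarks, AI evaluation, HPC benchmarks, database benchmarks, memory benchmarks, network benchmarks, hardware and disk benchmarks, graph benchmarks, human resources evaluation, and educational evaluation and the construction of indicator systems).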

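Submission process:

- 1. Submit your achievement by email to benchcouncil.evaluation@gmail.com. The material must not exceed one page and should include a description of the achievement and supporting material (including a link to the achievement), along with information on the contributors and the contact person.

- 2. The result of the preliminary evaluation will be sent by email. The evaluation takes no more than 1 working day, so please keep the material as concise as possible.

- 3. Achievements from papers published at Bench 2023 or IC 2023 are selected automatically once the supporting material is submitted.

- 4. Submitters must sign the conference attendance and safety notice. In addition, at least one person must register for the conference in the regular way, attend it, and exhibit the achievement during a designated time slot (poster, no longer than one day). Students who submit achievements may register as students.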

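Evaluation criteria:

- 1. Be truthful and pragmatic; the origin of an achievement does not matter. Submissions may be in Chinese or English, though providing both is recommended.

- 2. Achievements may take forms including, but not limited to, published or preprint papers, open-source code, products, or services.

- 3. To encourage broader participation and exchange, the acceptance rate will be no lower than 80%.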

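Registration

Once an achievement is selected, at least one person must register for the conference.

Please transfer the payment to the following bank account.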

- Account name: HONG KONG AI AND CHIP BENCHMARK RESEARCH LIMITED

- Account number: 801-670811-838

- Account address: 6/F Manulife Place, 348 Kwun Tong Road, Kowloon, Hong Kong.

- Bank name: The Hongkong and Shanghai Banking Corporation Limited

- Bank address: HSBC Main Building, 1 Queen's Road Central, Central, Victoria City, Hong Kong.

- SWIFT code: HSBCHKHHHKH

Alternatively, you can pay by credit card through the following registration link: https://eur.cvent.me/5qQEq

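1. One standard registration for FICC 2023.

- USD 490 before November 25, USD 550 after November 25.

- Alternatively, one simple registration, which does not include paper publication (meals not included).

- USD 320 before November 25, USD 360 after November 25.

2. A standard booth for 1 day.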

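In addition, we offer upgrade services to academic and industrial institutions listed in the Top 100 achievement rankings (AI100, Chip100, Open100, Bench100); the registration link is https://eur.cvent.me/k85BK. Institutions that register for an upgrade service receive additional benefits at the FICC 2023 conference. The number of registrations, the length of the talk, and the amount of booth space depend on whether you are from academia or industry. Customized services are also available on request.

The default upgrade services are as follows: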

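Upgrade services for academic institutions:

- Academic Bronze:

  - USD 1,000.

  - 2 free registrations for FICC 2023.

  - A 20-minute talk in a regular session.

  - A double standard booth space for 2 days.

- Academic Silver:

  - USD 3,000.

  - 4 free registrations for FICC 2023.

  - A 20-minute talk in a regular session.

  - A double standard booth space for 4 days.

  - A 5-page evaluation report. See the web page /evaluation/ for more information.

- Academic Gold:

  - USD 4,000.

  - 4 free registrations for FICC 2023.

  - A 30-minute talk in a main session.

  - A double standard booth space for 4 days.

  - A 5-page evaluation report. See the web page /evaluation/ for more information.

- Academic Platinum:

  - USD 5,000.

  - 6 free registrations for FICC 2023.

  - A 30-minute talk in a plenary session.

  - A double standard booth space for 4 days.

  - A 5-page evaluation report. See the web page /evaluation/ for more information.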

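Upgrade services for industry:

- Industry Bronze:

  - USD 2,000.

  - 2 free registrations for FICC 2023.

  - A 20-minute talk in a regular session.

  - A double standard booth space for 2 days.

- Industry Silver:

  - USD 4,000.

  - 4 free registrations for FICC 2023.

  - A 20-minute talk in a regular session.

  - A double standard booth space for 4 days.

- Industry Gold:

  - USD 8,000.

  - 6 free registrations for FICC 2023.

  - A 30-minute talk in a main session.

  - A double standard booth space for 4 days.

- Industry Platinum:

  - USD 10,000.

  - 8 free registrations for FICC 2023.

  - A 30-minute talk in a plenary session.

  - A quadruple standard booth space for 4 days.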